DWQ

Collection

A collection of DWQ models for Apple Silicon

•

2 items

•

Updated

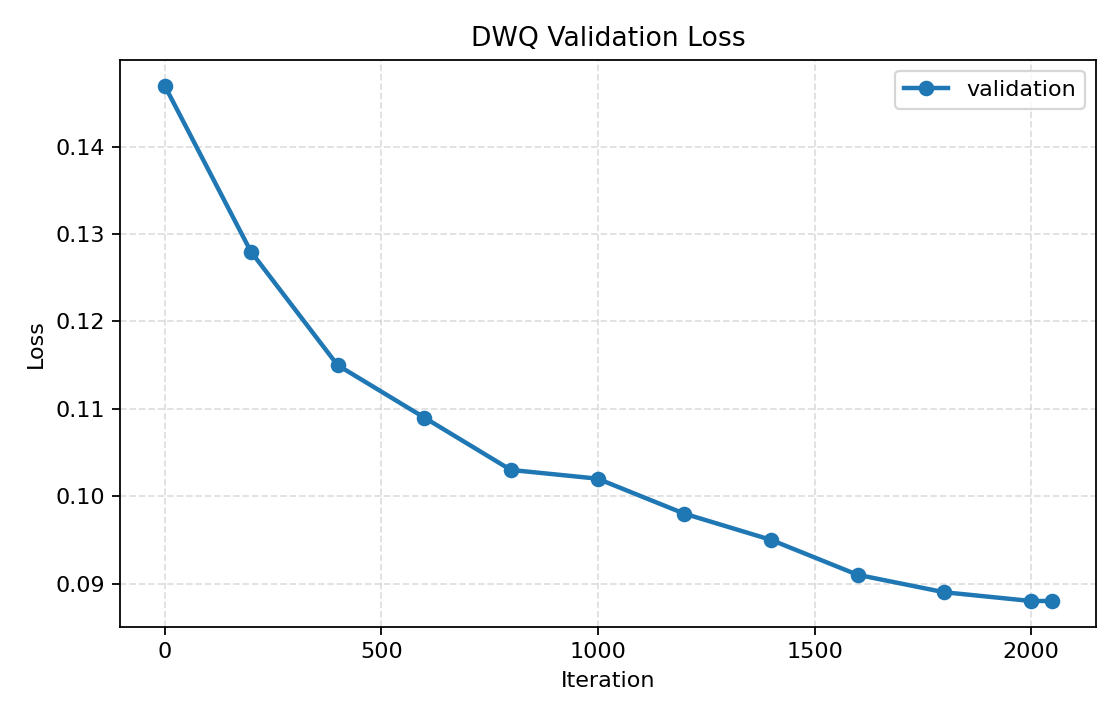

This model was quantized to 3-bit using DWQ with mlx-lm version 0.28.4.

| Parameter | Value |

|---|---|

| DWQ learning rate | 3e-7 |

| Batch size | 1 |

| Dataset | allenai/tulu-3-sft-mixture |

| Initial validation loss | 0.146 |

| Final validation loss | 0.088 |

| Relative KL reduction | ≈40 % |

| Tokens processed | ≈1.09 M |

pip install mlx-lm

from mlx_lm import load, generate

model, tokenizer = load("catalystsec/MiniMax-M2-3bit-DWQ")

prompt = "hello"

if tokenizer.chat_template is not None:

prompt = tokenizer.apply_chat_template(

[{"role": "user", "content": prompt}],

add_generation_prompt=True,

)

response = generate(model, tokenizer, prompt=prompt, verbose=True)

print(response)

Base model

MiniMaxAI/MiniMax-M2