[BENCHMARKS coming soon...]

Qwen3-VL-12B-Thinking-Brainstorm20x

This repo contains the full-precision source code, in "safetensors" format, for generating GGUF, GPTQ, EXL2, AWQ, HQQ and other formats. The source code can also be used directly.

This model contains the full source of the original "Qwen3-VL-8B-Thinking" coupled with the Brainstorm 20x adapter (by DavidAU).

This is the full image/text/multimodal model, and it is fully tested.

Brainstorm 20x augments text generation as well as "image" description, and in many cases it raises the raw benchmarks of the model too.

Brainstorm also augments reasoning/thinking.

Adding the Brainstorm adapter has grown the model from 8B to 12B parameters (now with 55 layers and 608 tensors).

IMPORTANT-GGUF QUANTS:

You need both the GGUF quant(s) AND the special "mmproj" GGUF quant (Q8, F16, BF16 or F32) to use all functions with this model.
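A quick way to confirm that a download folder holds both pieces is a small check like the one below. This is only a sketch: the helper names `find_gguf_pair` and `ready_for_images` are hypothetical, and it assumes the projector file has "mmproj" somewhere in its filename, which this repo does not guarantee.

```python
from pathlib import Path


def find_gguf_pair(folder):
    """Return (model_files, mmproj_files) found in `folder`.

    Assumption: the vision projector file has "mmproj" in its name and
    every other .gguf file is a main model quant.
    """
    ggufs = sorted(Path(folder).glob("*.gguf"))
    mmproj = [p.name for p in ggufs if "mmproj" in p.name.lower()]
    models = [p.name for p in ggufs if "mmproj" not in p.name.lower()]
    return models, mmproj


def ready_for_images(folder):
    """True only when at least one model quant AND one mmproj file exist."""
    models, mmproj = find_gguf_pair(folder)
    return bool(models) and bool(mmproj)
```

If `ready_for_images` returns False, text generation may still work, but image input most likely will not.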

SPECIALIZED MAX GGUF QUANTS:

https://huggingface.co/DavidAU/Qwen3-VL-12B-Thinking-Brainstorm20x-NEO-MAX-GGUF

MODEL DETAILS:

(Quants, BENCHMARKS, Brainstorm details, org model details from Qwen, and then help section)

This model requires:

- Jinja (embedded) or CHATML template

- Max context of 256k.

Settings used for testing (suggested):

- Temp .3 to .7 (but .8 to 1.5 for creative)

- Rep pen 1.05 to 1.1

- Top-p .8, min-p .05

- Top-k 20

- Min context of 8k for thinking / output.

- No system prompt.
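The suggested settings above can be kept as plain data and turned into one flat dictionary per use case. This is a minimal sketch: the key names, the choice of range midpoints, and reading "8k" as 8192 tokens are assumptions for illustration, not part of the model's requirements.

```python
# Suggested test settings from the list above, as plain data.
SUGGESTED = {
    "temperature": {"general": (0.3, 0.7), "creative": (0.8, 1.5)},
    "repetition_penalty": (1.05, 1.1),
    "top_p": 0.8,
    "min_p": 0.05,
    "top_k": 20,
    "min_context": 8192,  # "8k" read as 8192 tokens (assumption)
}


def sampler_settings(use_case="general"):
    """Build one flat settings dict, using the midpoint of each range."""
    low, high = SUGGESTED["temperature"][use_case]
    rp_low, rp_high = SUGGESTED["repetition_penalty"]
    return {
        "temperature": round((low + high) / 2, 3),
        "repetition_penalty": round((rp_low + rp_high) / 2, 3),
        "top_p": SUGGESTED["top_p"],
        "min_p": SUGGESTED["min_p"],
        "top_k": SUGGESTED["top_k"],
        "min_context": SUGGESTED["min_context"],
    }
```

Calling `sampler_settings("creative")` picks the higher temperature range; the other values stay the same for both use cases.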

This model will respond well to both detailed instructions and step by step refinement and additions to code.

Likewise for creative use cases.

As this is an instruct model, it will also benefit from a detailed system prompt.

For simpler coding problems, lower quants will work well; for complex/multi-step problem solving, Q6 or Q8 is suggested.

QUANTS:

GGUF? GGUF Imatrix? Other?

Special thanks to Team Mradermacher, Team Nightmedia and other quanters!

See "model tree" in the upper right and click on "quantizations".

New quants will automatically appear.

BENCHMARKS by Nightmedia (who also makes MLX quants)

https://huggingface.co/nightmedia/

- coming soon -

What is Brainstorm?

Brainstorm 20x

The BRAINSTORM process was developed by David_AU.

Some of the core principles behind this process are discussed in this scientific paper: Progressive LLaMA with Block Expansion.

However, I went in a completely different direction from what was outlined in this paper.
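For background only, the block-expansion idea from the cited paper can be sketched as a layer layout. This is not the Brainstorm process, which (as noted above) goes in a different direction; the function name and the example numbers are illustrative and are not the layer counts used to build this model.

```python
def block_expansion_layout(n_layers, n_groups, added_per_group=1):
    """Layer order after block expansion, as described in the cited paper.

    The original layers are split into equal groups; after each group,
    `added_per_group` new blocks are appended. In the paper the new blocks
    start as copies whose output projections are zeroed, so each one is
    initially an identity mapping and the model's outputs are unchanged.
    """
    if n_layers % n_groups != 0:
        raise ValueError("n_layers must divide evenly into n_groups")
    size = n_layers // n_groups
    layout = []
    for g in range(n_groups):
        start = g * size
        layout += [("orig", i) for i in range(start, start + size)]
        # New blocks are copied from the last original layer of the group.
        layout += [("new", start + size - 1)] * added_per_group
    return layout
```

With `n_layers=32` and `n_groups=8` the layout has 40 entries: every four original layers are followed by one new block.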

What is "Brainstorm" ?

The reasoning center of an LLM is taken apart, reassembled, and expanded.

In this case for this model: 20 times

Then these centers are individually calibrated. These "centers" also interact with each other, which introduces subtle changes into the reasoning process. The calibrations then adjust (dial up or down) these "changes". The number of centers (5x, 10x, etc.) allows more "tuning points" to further customize how the model reasons, so to speak.

The core aim of this process is to increase the model's detail, concept and connection to the "world", general concept connections, prose quality and prose length without affecting instruction following.

This will also enhance creative use cases of any kind, including "brainstorming", creative art form(s) and similar use cases.

Here are some of the enhancements this process brings to the model's performance:

- Prose generation seems more focused on the moment to moment.

- Sometimes there will be "preamble" and/or foreshadowing present.

- Fewer or no "cliches"

- Better overall prose and/or more complex / nuanced prose.

- A greater sense of nuance on all levels.

- Coherence is stronger.

- Description is more detailed, and connected closer to the content.

- Similes and metaphors are stronger and better connected to the prose, story, and character.

- Sense of "there" / in the moment is enhanced.

- Details are more vivid, and there are more of them.

- Prose generation length can be long to extreme.

- Emotional engagement is stronger.

- The model will take FEWER liberties vs a normal model: It will follow directives more closely but will "guess" less.

- The MORE instructions and/or details you provide, the more strongly the model will respond.

- Depending on the model, "voice" may be more "human" vs the original model's "voice".

Other "lab" observations:

- This process does not, in my opinion, make the model 5x or 10x "smarter" - if only that were true!

- However, a change in "IQ" was not an issue / a priority, and was not tested or calibrated for, so to speak.

- From lab testing, it seems to ponder and consider more carefully, roughly speaking.

- You could say this process sharpens the model's focus on its task(s) at a deeper level.

The process to modify the model occurs at the root level - source files level. The model can then be quanted as GGUF, EXL2, AWQ, etc.

Qwen3-VL-8B-Thinking

Meet Qwen3-VL — the most powerful vision-language model in the Qwen series to date.

This generation delivers comprehensive upgrades across the board: superior text understanding & generation, deeper visual perception & reasoning, extended context length, enhanced spatial and video dynamics comprehension, and stronger agent interaction capabilities.

Available in Dense and MoE architectures that scale from edge to cloud, with Instruct and reasoning‑enhanced Thinking editions for flexible, on‑demand deployment.

Key Enhancements:

Visual Agent: Operates PC/mobile GUIs—recognizes elements, understands functions, invokes tools, completes tasks.

Visual Coding Boost: Generates Draw.io/HTML/CSS/JS from images/videos.

Advanced Spatial Perception: Judges object positions, viewpoints, and occlusions; provides stronger 2D grounding and enables 3D grounding for spatial reasoning and embodied AI.

Long Context & Video Understanding: Native 256K context, expandable to 1M; handles books and hours-long video with full recall and second-level indexing.

Enhanced Multimodal Reasoning: Excels in STEM/Math—causal analysis and logical, evidence-based answers.

Upgraded Visual Recognition: Broader, higher-quality pretraining is able to “recognize everything”—celebrities, anime, products, landmarks, flora/fauna, etc.

Expanded OCR: Supports 32 languages (up from 19); robust in low light, blur, and tilt; better with rare/ancient characters and jargon; improved long-document structure parsing.

Text Understanding on par with pure LLMs: Seamless text–vision fusion for lossless, unified comprehension.

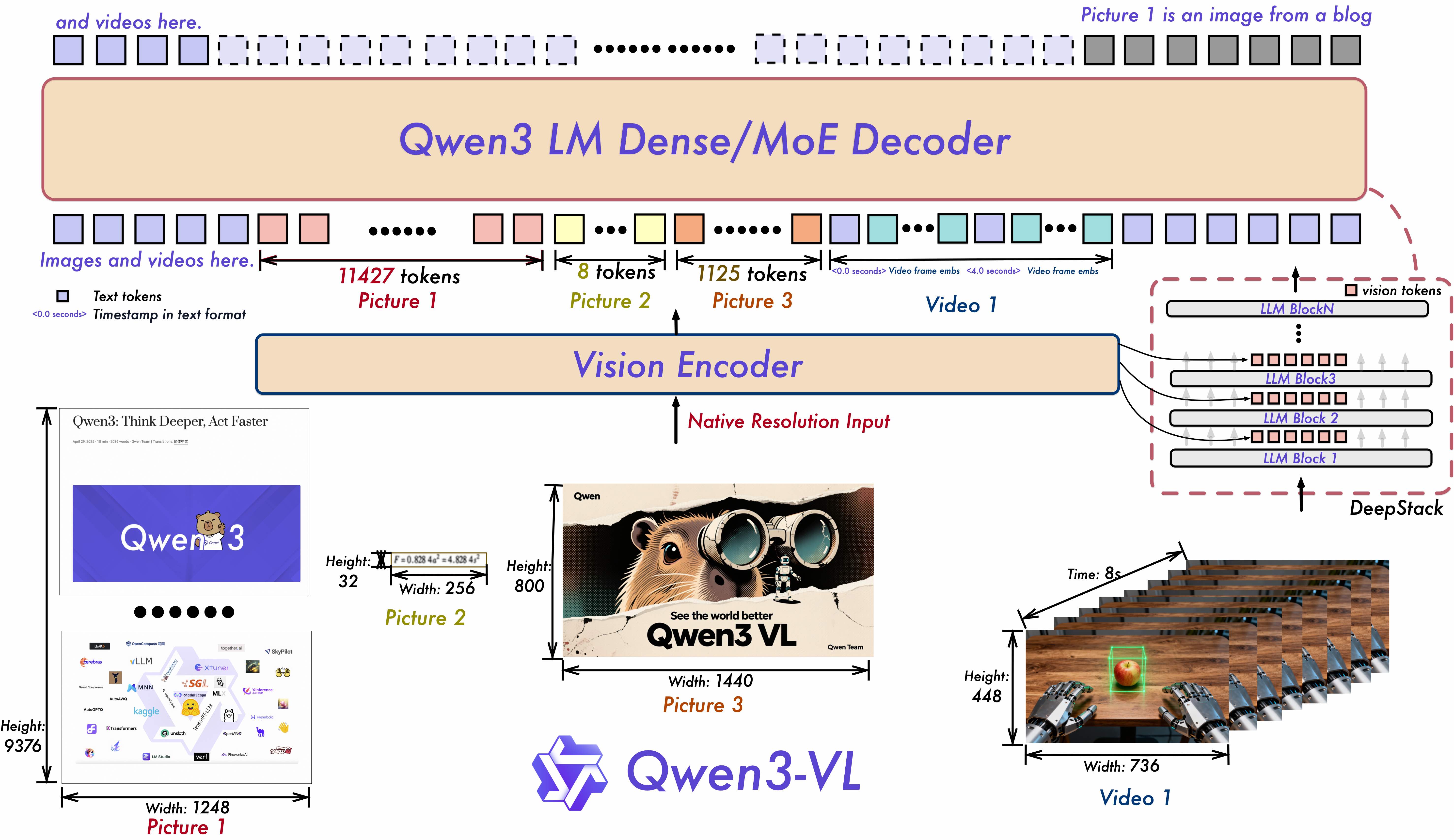

Model Architecture Updates:

Interleaved-MRoPE: Full‑frequency allocation over time, width, and height via robust positional embeddings, enhancing long‑horizon video reasoning.

DeepStack: Fuses multi‑level ViT features to capture fine‑grained details and sharpen image–text alignment.

Text–Timestamp Alignment: Moves beyond T‑RoPE to precise, timestamp‑grounded event localization for stronger video temporal modeling.

This is the weight repository for Qwen3-VL-8B-Thinking.

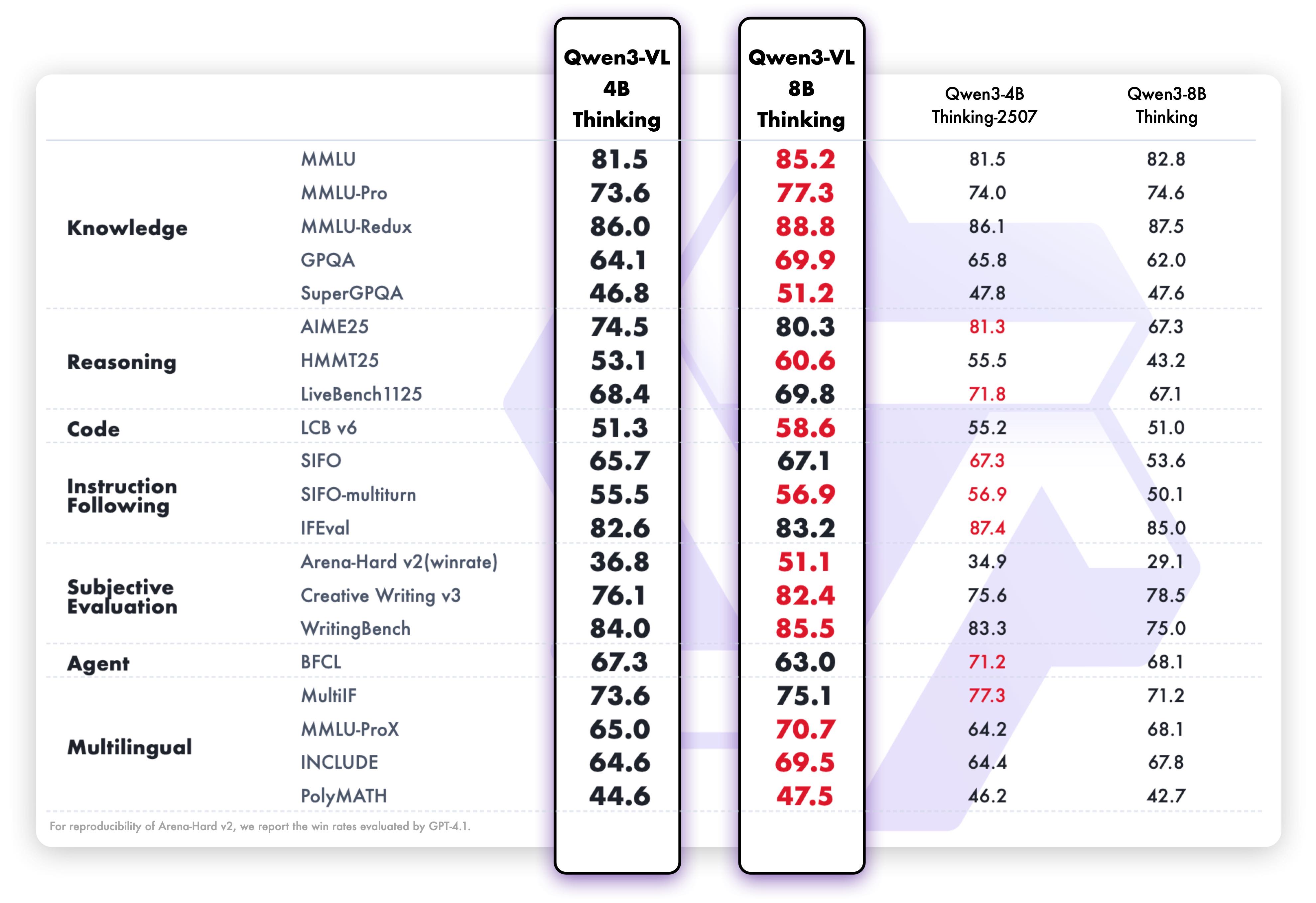

Model Performance

Multimodal performance

Quickstart

Below, we provide simple examples to show how to use Qwen3-VL with 🤖 ModelScope and 🤗 Transformers.

The code for Qwen3-VL has been merged into the latest Hugging Face transformers, and we advise you to build from source with this command:

```bash
pip install git+https://github.com/huggingface/transformers
# pip install transformers==4.57.0 # currently, V4.57.0 is not released
```
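To check whether an already installed transformers is recent enough, a version comparison along these lines may help. It is a sketch only: the helper names are hypothetical, and the `4.57.0` minimum is taken from the commented-out install line above rather than from any verified requirement.

```python
from importlib import metadata


def version_tuple(version):
    """Turn '4.57.0.dev0' into (4, 57, 0); stops at the first non-numeric piece."""
    parts = []
    for piece in version.split("."):
        if not piece.isdigit():
            break
        parts.append(int(piece))
    return tuple(parts)


def transformers_is_new_enough(minimum="4.57.0"):
    """True if an installed transformers is at or above `minimum`."""
    try:
        installed = metadata.version("transformers")
    except metadata.PackageNotFoundError:
        return False
    return version_tuple(installed) >= version_tuple(minimum)
```

A source build typically reports a development version such as `4.57.0.dev0`, which this comparison treats as meeting the minimum.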

Using 🤗 Transformers to Chat

The following code snippet shows how to use the chat model with transformers:

```python
from transformers import Qwen3VLForConditionalGeneration, AutoProcessor

# default: Load the model on the available device(s)
model = Qwen3VLForConditionalGeneration.from_pretrained(
    "Qwen/Qwen3-VL-8B-Thinking", dtype="auto", device_map="auto"
)

# We recommend enabling flash_attention_2 for better acceleration and memory saving,
# especially in multi-image and video scenarios.
# (This variant also needs `import torch`.)
# model = Qwen3VLForConditionalGeneration.from_pretrained(
#     "Qwen/Qwen3-VL-8B-Thinking",
#     dtype=torch.bfloat16,
#     attn_implementation="flash_attention_2",
#     device_map="auto",
# )

processor = AutoProcessor.from_pretrained("Qwen/Qwen3-VL-8B-Thinking")

messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "image",
                "image": "https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen-VL/assets/demo.jpeg",
            },
            {"type": "text", "text": "Describe this image."},
        ],
    }
]

# Preparation for inference
inputs = processor.apply_chat_template(
    messages,
    tokenize=True,
    add_generation_prompt=True,
    return_dict=True,
    return_tensors="pt"
)
inputs = inputs.to(model.device)

# Inference: Generation of the output
generated_ids = model.generate(**inputs, max_new_tokens=128)

# Drop the prompt tokens so only the newly generated tokens are decoded
generated_ids_trimmed = [
    out_ids[len(in_ids) :] for in_ids, out_ids in zip(inputs.input_ids, generated_ids)
]
output_text = processor.batch_decode(
    generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False
)
print(output_text)
```
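Because this is a Thinking model, the decoded text usually contains the reasoning followed by the final answer. The helper below is a minimal sketch for separating the two. It assumes the reasoning ends with a `</think>` tag that survives decoding; the function name `split_thinking` is hypothetical and not part of transformers.

```python
def split_thinking(text, end_tag="</think>"):
    """Split generated text into (reasoning, answer).

    Assumption: the reasoning ends with `end_tag`; an opening <think>
    tag may or may not be present in the decoded output.
    """
    if end_tag not in text:
        # No closing tag found: treat everything as reasoning
        # (for example, generation may have stopped before the answer).
        return text.strip(), ""
    reasoning, answer = text.split(end_tag, 1)
    reasoning = reasoning.replace("<think>", "", 1).strip()
    return reasoning, answer.strip()
```

With `max_new_tokens=128`, as in the snippet, a Thinking model may not reach the end of its reasoning; the test settings earlier in this card suggest at least 8k of context for thinking and output.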

Generation Hyperparameters

VL

```bash
export greedy='false'
export top_p=0.95
export top_k=20
export repetition_penalty=1.0
export presence_penalty=0.0
export temperature=1.0
export out_seq_length=40960
```

Text

```bash
export greedy='false'
export top_p=0.95
export top_k=20
export repetition_penalty=1.0
export presence_penalty=1.5
export temperature=1.0
export out_seq_length=32768  # for aime, lcb, and gpqa, 81920 is recommended
```
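These values can be carried over to the transformers example as keyword arguments for `model.generate`. The mapping below is a sketch under two assumptions: that `out_seq_length` corresponds to `max_new_tokens`, and that `greedy='false'` means sampling is enabled. `presence_penalty` is left out because `generate` has no argument with that name; it is a setting offered by some serving engines instead. The names `PRESETS` and `to_generate_kwargs` are hypothetical.

```python
# Values copied from the "VL" and "Text" lists above.
PRESETS = {
    "vl": {
        "top_p": 0.95, "top_k": 20, "repetition_penalty": 1.0,
        "presence_penalty": 0.0, "temperature": 1.0, "out_seq_length": 40960,
    },
    "text": {
        "top_p": 0.95, "top_k": 20, "repetition_penalty": 1.0,
        "presence_penalty": 1.5, "temperature": 1.0, "out_seq_length": 32768,
    },
}


def to_generate_kwargs(preset):
    """Map a preset to keyword arguments for `model.generate`."""
    p = PRESETS[preset]
    return {
        "do_sample": True,  # greedy='false'
        "temperature": p["temperature"],
        "top_p": p["top_p"],
        "top_k": p["top_k"],
        "repetition_penalty": p["repetition_penalty"],
        # Assumption: out_seq_length corresponds to max_new_tokens.
        "max_new_tokens": p["out_seq_length"],
    }
```

Usage would look like `model.generate(**inputs, **to_generate_kwargs("vl"))`.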

Help, Adjustments, Samplers, Parameters and More

CHANGE THE NUMBER OF ACTIVE EXPERTS:

See this document:

https://huggingface.co/DavidAU/How-To-Set-and-Manage-MOE-Mix-of-Experts-Model-Activation-of-Experts

Settings: CHAT / ROLEPLAY and/or SMOOTHER operation of this model:

In "KoboldCpp", "oobabooga/text-generation-webui" or "Silly Tavern":

Set the "Smoothing_factor" to 1.5

- In KoboldCpp: Settings -> Samplers -> Advanced -> "Smooth_F"

- In text-generation-webui: parameters -> lower right.

- In Silly Tavern this is called "Smoothing".

NOTE: For "text-generation-webui"

-> if using GGUFs you need to use "llama_HF" (which involves downloading some config files from the SOURCE version of this model)

Source versions (and config files) of my models are here:

OTHER OPTIONS:

Increase rep pen to 1.1 to 1.15 (you don't need to do this if you use "smoothing_factor")

If the interface/program you are using to run AI MODELS supports "Quadratic Sampling" ("smoothing") just make the adjustment as noted.
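For readers curious what the smoothing setting does, here is a sketch of the commonly used form of quadratic sampling, in which each logit is reshaped around the highest one. It is illustrative only: individual front ends may implement it differently (some add a further curve parameter), and the function name is hypothetical.

```python
def quadratic_smoothing(logits, smoothing_factor=1.5):
    """Apply a quadratic transform around the highest logit.

    Each logit x becomes: top - smoothing_factor * (x - top) ** 2.
    The top logit is unchanged; every other logit is moved according
    to the square of its distance from the top.
    """
    top = max(logits)
    return [top - smoothing_factor * (x - top) ** 2 for x in logits]
```

For logits `[2.0, 1.0, 0.0]` and a factor of 1.5, the top logit stays at 2.0 while the distant logit is pushed down much harder than the near one, which suppresses unlikely tokens while keeping close candidates in play.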

Highest Quality Settings / Optimal Operation Guide / Parameters and Samplers

This is a "Class 1" model:

For all settings used for this model (including specifics for its "class"), example generation(s), and an advanced settings guide (which often addresses any model issue(s)), as well as methods to improve model performance for all use case(s) including chat and roleplay, please see:

You can see all parameters used for generation, in addition to advanced parameters and samplers to get the most out of this model here:
